The True History That Shaped The US In Modern Warfare

For the casual observer in 2026, it can feel almost impossible to make sense of what is happening in the world. America is suddenly everywhere again in the headlines, Iran is under attack, Trump’s decisions look erratic and extreme, and social media is flooded with outrage, propaganda, half-truths, and tribal certainty from every direction. But none of this appeared out of nowhere. To understand why the United States keeps returning to the centre of global conflict, you have to look beyond the daily news cycle and back across decades of American domestic and foreign policy, economic sanctions, military expansion, media conditioning, and the hardening of right-wing political identity at home. This article is a plain summary of that longer history and of the geopolitical interventions through which the United States has shaped events on foreign soil for more than half a century.

The popular version of American war history in World War II usually starts and ends with Pearl Harbor, D-Day, and the atomic bomb. That version is not wrong, but it is incomplete. The fuller story is that the war did not just make America a military victor. It transformed the United States into the dominant postwar economic and strategic power, while also leaving behind a series of myths that still confuse people today.

One of the biggest misconceptions is that America emerged from the war on top simply because it had the bomb.

Nuclear weapons mattered enormously, but they were only part of the picture. The United States came out of the war with its industrial base intact, its homeland largely untouched, and its manufacturing power unmatched. While much of Europe and Asia had been physically devastated, America had spent the war expanding production, building ships, planes, vehicles, ammunition, and infrastructure at an industrial scale the world had never seen. That economic position mattered just as much as battlefield victory.

Britain had to “pay America back after World War II”

Lend-Lease aid used during the war was not treated like an ordinary commercial debt that Britain later had to settle in full. What Britain did repay was a large postwar American loan, agreed after the war ended, to help keep the UK financially afloat during the brutal transition from wartime survival to peacetime rebuilding. That loan was tied to Britain’s collapsing export base, shortage of dollars, and need to keep importing essentials while rebuilding the economy.

America’s Bomb

Hiroshima was destroyed by an atomic fission bomb built through the Manhattan Project during the war itself, not by technology seized from Germany afterward. The hydrogen bomb came years later. Where the German scientists mattered most was in rockets, missiles, and later aerospace development.

That distinction matters because it changes how we understand the arms race.

America did not become powerful because it looted one secret and suddenly leapt ahead. It had already mobilised science, industry, logistics, and military planning at an unprecedented scale. After the war, both the United States and the Soviet Union then absorbed German technical expertise, especially in rocketry, which helped accelerate missile development and deepen the Cold War. So the postwar seizure of scientists was important, but it was more about what came next than about what ended the war in Japan.

A further overlooked truth is how much of America’s wartime strength came from systems rather than single moments. The mythology focuses on iconic events, but wars at that scale are won through shipping lanes, fuel, steel, engines, ports, codebreaking, rail, food supply, and the ability to replace losses faster than the enemy can inflict them. America’s war machine was not just brave soldiers and decisive generals. It was assembly lines, shipyards, oil, and mathematics. That is less dramatic than the movie version, but far closer to the economic reality of a war that sank every major economy except America’s.

There is also a darker side to America’s war rise that often gets softened in popular memory. The atomic bombings were not just technological triumphs. They marked the beginning of a world in which industrial warfare and mass civilian destruction became inseparable from global power. After Hiroshima and Nagasaki, the United States was not merely victorious. It was the first nation to demonstrate nuclear warfare in practice, and that permanently altered the structure of international politics. From then on, power was no longer measured only in armies and fleets, but in the ability to destroy entire cities.

That shift helped define the postwar order, but it did not operate in isolation.

America became a superpower because it combined military reach, financial strength, industrial dominance, and technological leadership. The bomb amplified that position. At the same time, the Soviet Union emerged from the war as the other great power, not because it was economically stronger, but because it had absorbed staggering losses, crushed Nazi Germany on the Eastern Front, and expanded its military and political reach across Eastern Europe. The Cold War was born from that dual reality.

A defining feature of the war’s aftermath is that the peace did not feel financially peaceful at all. Britain, supposedly on the winning side, came out nearly bankrupt and dependent on American financing. Europe was shattered. The Soviet Union was victorious but devastated. The United States, by contrast, was in a position to lend, supply, build, and dictate terms far more than any other Western power. That imbalance explains a great deal about the world that followed.

On 26 July 1945, the U.S., Britain, and China issued the Potsdam Declaration, demanding Japan’s unconditional surrender and warning that the alternative was “prompt and utter destruction.” When Japan did not accept those terms, the U.S. dropped atomic bombs on Hiroshima on 6 August 1945 and on Nagasaki on 9 August 1945.

The atomic bombings became the war’s final, devastating act, forcing the world to confront a new reality in which a single weapon could destroy an entire city and permanently reshape global power. They helped bring World War II to its end, but they also opened the nuclear age, where peace would increasingly rest not on trust, but on fear of total destruction.

Hiroshima and Nagasaki were civilian-heavy cities, and by modern standards the deliberate destruction of entire urban populations would be extremely difficult to defend legally or morally. In 1945, however, the laws of war were less developed, and the United States justified the bombings as part of its military campaign against Japan. So the honest summary is that many people see the atomic bombings as morally indistinguishable from a war crime, but there was no definitive international war-crimes ruling against the U.S. for them at the time.

After defeat, Japan came under U.S.-led occupation, was demilitarized, and adopted a new constitution with Article 9, which renounced war and became a foundation of Japan’s postwar pacifist identity. At the same time, Hiroshima and Nagasaki became central to Japan’s public memory, antinuclear stance, and diplomatic identity as the only country to have suffered atomic bombings in war. Economically, Japan went on to rebuild with extraordinary speed and became one of the world’s major industrial powers, but politically and psychologically the bombings left a lasting mark: modern Japan’s caution about war, deep sensitivity to nuclear weapons, and long postwar dependence on the U.S. security umbrella all grew in part from that experience.

So the real American World War II story is not only one of battlefield heroics or secret weapons. It is the story of how a continental industrial power used war mobilisation to become the central force in the postwar world. It is also a story of how public memory compresses complicated events into neat myths, such as the idea that nuclear weapons alone made America supreme. The truth is more layered, more structural, and in many ways more unsettling.

The Breadwinner Toll of WW2

World War II was not only a human catastrophe but a massive labour and household-income shock, because the war killed an estimated 35 to 60 million people overall, with 40 to 50 million often used as the standard summary figure, and those losses fell heavily on working-age men in countries that fought for longer, were occupied, or saw fighting on their own soil. That meant millions of families lost breadwinners, while economies lost skilled workers, farmers, miners, factory hands, and future taxpayers at the same time that homes, transport networks, and industry were being destroyed.

The United States, by contrast, suffered a far smaller direct human and physical shock at home. The U.S. military death toll was just over 400,000 service members, a grievous loss, but in demographic and economic terms far less devastating than the losses absorbed by countries such as Britain, the Soviet Union, Poland, Germany, and China, all of which carried heavier population losses and, in many cases, physical destruction across their own territory.

America emerged from the war with grief and military losses, but with nowhere near the same scale of demographic scarring and domestic ruin that crippled much of Europe and Asia; that difference mattered enormously in explaining why the U.S. entered the postwar era in a much stronger economic position than many of its allies.

Jewish populations in America

After World War II, the Jewish postwar migration story was driven by a concrete refugee crisis: from 1945 to 1952, more than 250,000 Jewish displaced persons lived in camps and urban centers in Germany, Austria, and Italy because many survivors could not safely return to Eastern Europe after the Holocaust, their communities had been destroyed, and antisemitism remained intense.

In the United States, Congress passed the Displaced Persons Act of 1948, later amended in 1950, and by 1952 more than 80,000 Jewish displaced persons had immigrated to the U.S. under that framework. At the same time, Palestine became the most desired destination for many survivors. From 1945 to 1948, growing numbers of Jewish people chose British-controlled Palestine, and the Brihah movement moved more than 100,000 Jews there despite British restrictions. After Israel was established on 14 May 1948, Jewish survivor immigration accelerated further, and by 1952 about 136,000 Jewish displaced persons had gone to Israel, with another 20,000 resettling in countries such as Canada and South Africa.

It is important to understand that before Israel was created in 1948, most Jews lived across the wider diaspora in Europe, the Middle East, North Africa, and later the Americas, with only a relatively small Jewish population living in Palestine itself. That is why many early Israeli leaders and families had surnames, first names, and cultural backgrounds that were clearly European, especially Ashkenazi, because many had immigrated from countries such as Poland, Russia, Germany, and elsewhere.

Jewish Americans make up only about 2.4% of U.S. adults, yet they were disproportionately important in the early growth of sectors like Hollywood and some Wall Street firms because earlier immigrant waves, urban concentration, high educational attainment, and exclusion from parts of the old Protestant establishment pushed many talented Jewish families into newer, faster-growing industries where outsiders could still build influence. That helps explain why a number of famous studios and firms had Jewish founders or leading Jewish executives — for example, Goldman Sachs traces its origins to Marcus Goldman, a Jewish immigrant, and Hollywood’s early development involved many Jewish immigrant entrepreneurs.

Palestine, Gaza and Hamas

On the creation of Israel in the Middle East, the plain fact is that the Palestinians were not consulted in any meaningful democratic sense before Britain backed the establishment of a Jewish national home inside Palestine.

The 1917 Balfour Declaration was made by Britain without the consent of the Arab majority living there, and while Britain later administered Palestine under the Mandate system, Palestinian Arab opposition was repeatedly ignored rather than treated as decisive. By the time the issue reached the UN in 1947, Palestinians and Arab leaders did present their objections, but they still had no effective power to block partition.

Britain claimed the authority through wartime conquest and a League of Nations mandate, but it had no genuine democratic right to decide Palestine’s future without the consent of the people already living there. The reality was that the Holocaust produced a large, stateless Jewish refugee population. While the booming U.S. absorbed a substantial share, Israel became the single biggest political and demographic destination for many survivors in the immediate postwar years, and the new state, framed as a return to a historic homeland, drew staunch support from the U.S.

Since Israel’s establishment in 1948, and especially since Israel occupied Gaza in 1967, the relationship between Israeli authorities and Palestinians in Gaza has been defined by conflict, displacement, military control, repeated uprisings, and recurring war. Israel occupied Gaza for decades after the Six-Day War, later withdrew its settlements and permanent ground presence in 2005, but retained control over Gaza’s borders, airspace, and much of its movement and access, while cycles of blockade, rocket fire, airstrikes, and ground offensives deepened hostility and humanitarian crisis. In plain terms, the relationship has not been one of coexistence, but of long-running domination, resistance, separation, and repeated violence.

Hamas was founded in 1987 at the start of the First Intifada. It grew out of the Palestinian branch of the Muslim Brotherhood, mainly in Gaza, and was created as an Islamist Palestinian movement opposed to Israeli occupation and separate from the secular PLO/Fatah leadership. Over time it became both a political and armed organization, later winning the 2006 Palestinian legislative elections and taking control of Gaza in 2007. The United States and a number of other governments designate Hamas as a terrorist organization because of its attacks on civilians and armed operations.

Whose homeland?

Those who hold the common Israeli view that “Israel predates Palestine” are not usually making a narrow modern legal point; they are making a civilizational one. They are saying the Jewish people had an ancient kingdom, religion, language, temples, and identity tied to that land long before there was a modern Palestinian national movement, and that exile did not erase that connection. In their view, modern Israel is therefore not a brand-new colonial invention but a national return to an ancestral homeland.

The reason the argument is so powerful to them is that it blends history, religion, identity, persecution, and survival into one story: “we were there first, we were expelled and scattered, and we came back.” Where the dispute begins is that Palestinians hear this not as ancient memory but as a justification for modern dispossession.

In Jewish belief, the idea is that God made a covenant with the Jewish people and promised the Land of Israel to them as their ancestral and sacred homeland.

From the river of Egypt to the great river, the Euphrates

On the broadest modern reading, if “river of Egypt” is taken to mean the Nile, the phrase would cover parts of Egypt, Israel/Palestine, Jordan, Syria, and Iraq, and reach as far as Turkey, because the Euphrates rises in Turkey and flows through Syria and Iraq.

The United Nations

The United Nations was created in 1945 after the devastation of two world wars as an attempt to stop humanity from repeating the same catastrophic failure of diplomacy. Its core purpose was to give countries a permanent structure where disputes could be argued, mediated, pressured, and negotiated before they escalated into full-scale war. In principle, the UN is built on rules against aggression, support for sovereignty, human rights, and collective security, with bodies like the General Assembly and Security Council meant to keep international dialogue alive even between rivals. In reality, it is a flawed system because the most powerful states were given veto power, which often blocks action when their own interests are at stake. So the UN is not a guarantee of peace, but rather the world’s imperfect attempt to put diplomacy, law, and negotiation in front of war.

The Nuclear Threat Post WW2

What emerged was a system of nuclear deterrence: once multiple states had secure retaliatory capability, the logic of mutually assured destruction made direct great-power war vastly more dangerous because any nuclear first use could trigger catastrophic counterattack and potentially annihilate both sides. That fear did help push major powers toward caution, crisis management, arms control, and diplomacy, especially during and after the Cold War. But it did not create real trust or guaranteed peace; it created a tense “balance of terror” in which peace was preserved partly by the shared understanding that nuclear war would be disastrous for all, while the risks of miscalculation, accidents, escalation, and proliferation never disappeared. So the nuclear threat has often restrained direct war between major powers and elevated diplomacy, but it has done so through fear of catastrophe, not through moral consensus or stable harmony.

The Middle East

The United States did not take control of the Middle East through one single invasion or formal empire. It built power gradually through oil-for-security relationships, military access agreements, anti-communist alliances, arms sales, coups and interventions in some cases, and long-term partnerships with friendly regimes. A symbolic early turning point was Roosevelt’s 1945 meeting with King Ibn Saud, which helped lay the foundation for the long U.S.-Saudi relationship, followed by early American access to Dhahran in Saudi Arabia.

From there, Washington built a regional military network through allied states rather than direct colonial rule. Bahrain became central to U.S. naval power and now hosts the 5th Fleet, giving Washington reach over key maritime chokepoints such as the Strait of Hormuz, Suez Canal, and Bab el-Mandeb. Qatar became a major air-command hub through Al Udeid Air Base, while the UAE hosts important U.S. air-power infrastructure at Al Dhafra. Together, these bases form the backbone of a long-term American military architecture across the Gulf.

Oil was always central, but the broader goal was not simply to “take the oil.” It was to secure the flow of oil, protect shipping lanes, prevent rival powers from dominating the region, and keep Middle Eastern energy inside a Western-led financial and security order. That is why the enduring alliances still revolve around Saudi Arabia, Bahrain, Qatar, and the UAE, supported by weapons deals, regime protection, and permanent force presence. In simple terms, America gained control by becoming the region’s security guarantor and using that role to anchor the Middle East inside a U.S.-led strategic system that still operates today.

In the modern era, Israel is very much part of the wider U.S. regional order, but still not the main platform through which the U.S. physically secures Gulf oil. Its role is more as a high-end military partner, intelligence ally, and anti-Iran anchor, while countries like Saudi Arabia, Bahrain, Qatar, Kuwait, and the UAE remain the core basing and energy-security states. More recently, the Abraham Accords helped bring Israel into a more open strategic relationship with some Gulf states, which strengthened the broader U.S.-aligned bloc.

The Post War Petro Dollar Economy

Post World War II, the US emerged with huge industrial capacity, an intact domestic economy, and a consumer boom that drove mass demand for cars, suburbs, appliances, highways, and therefore far more energy, especially oil. Over the following decades, as global trade liberalised and firms chased lower costs, a large share of manufacturing shifted out of the US into lower-cost production hubs, with China becoming the standout winner by plugging into global value chains at massive scale.

After the collapse of Bretton Woods and the oil shocks of the 1970s, the US deepened strategic ties with major oil producers, especially Saudi Arabia, in a system that reinforced the use of the US dollar in global oil trade and helped sustain global demand for dollar assets. The result was that America increasingly benefited not only from making things, but from controlling the financial system, the reserve currency, and the settlement infrastructure around global trade and energy. So, broadly speaking, the US did not simply “stop needing production”; it shifted from being overwhelmingly a production-led hegemon to being a combined consumption, finance, energy, and dollar-system hegemon, while offshored manufacturing, especially to China, became a central part of how that model functioned.

In a number of documented Cold War cases, the United States did not secure influence simply through open diplomacy or fair commercial competition. It used covert action, political pressure, intelligence operations, support for friendly elites, and sometimes direct or indirect backing for coups to remove governments seen as hostile to US strategic or corporate interests.

That is not speculation in cases like Iran in 1953 and Guatemala in 1954; official and declassified records show US and CIA involvement in overthrowing governments in both countries. Declassified material and later congressional investigations also show extensive covert US intervention in places such as Chile, where Washington worked to block and then destabilize Allende.

US power was often built through a mix of military dominance, dollar power, alliances, multinational corporate reach, and, in some important cases, covert regime-change operations that helped produce governments more aligned with American geopolitical and economic priorities. In plain English: the record does show that Washington repeatedly undermined unfriendly leadership abroad when it believed strategic control, anti-communism, resources, or regional influence were at stake; but what it shows is a pattern of selective intervention, not a single uniform script applied everywhere.

US Power by Coup

The cases below are among the best-documented U.S. interventions after World War II that aimed at changing or shaping a local government. In some, the economic motive is explicit; in others it is mixed with anti-communism, military strategy, or regional control. Weaker or heavily disputed cases have been left off the list for brevity.

- Iran (1953) — oil: The documented U.S. record on Iran shows a covertly backed coup in 1953, decades of support for the Shah and his security state, then a post-1979 shift to isolation, economic strangulation, and recurring pressure campaigns designed to weaken the Iranian state and constrain its choices. The exact justifications changed over time — oil, anti-communism, regional strategy, hostages, terrorism, nuclear issues, missiles, human rights — but the underlying pattern is consistent: when Iran’s leadership or policies moved outside a U.S.-acceptable framework, Washington repeatedly used covert action, patronage, or sanctions to shape the outcome in its own favor.

- Guatemala (1954) — bananas / land / corporate interests: The CIA backed the removal of Jacobo Árbenz after land reform hit United Fruit interests; the coup ushered in repression and decades of instability.

- Congo / DRC (1960–65) — minerals / Cold War access: U.S. documents show a covert program aimed at removing Patrice Lumumba and backing more pro-Western leadership during the Congo Crisis.

- Brazil (1964) — anti-left realignment with business interests: Declassified records show Washington prepared to back the anti-Goulart coup, which replaced an elected government with a military dictatorship aligned with U.S. priorities.

- Dominican Republic (1965) — direct military intervention: The U.S. sent troops into Santo Domingo to prevent the constitutionalist side from winning, shaping the outcome of the country’s power struggle in Washington’s favor.

- Indonesia (1965–66) — anti-communist consolidation: Declassified U.S. records show embassy officials had detailed knowledge of the anti-PKI mass killings and supported the army-led destruction of Sukarno’s left base as Suharto rose.

- Chile (1970–73) — copper / corporate / anti-socialist destabilization: U.S. records and Senate findings show covert action to block or undermine Salvador Allende and efforts to promote a coup, ending in Pinochet’s dictatorship.

- South Vietnam (1963) — coup support inside a client state: U.S. records show Washington supported the removal of Ngo Dinh Diem when he became politically inconvenient, deepening U.S. control over the war’s direction.

- Grenada (1983) — direct overthrow by invasion: The U.S. invaded Grenada, toppled the ruling authorities, and installed an interim order more acceptable to Washington; the UN General Assembly condemned the invasion as a violation of international law.

- Nicaragua (1980s) — contra war to unseat Sandinistas: The U.S. armed and backed the Contras in a long campaign to weaken and remove the Sandinista government, contributing to major civilian suffering and economic destruction.

- Panama (1989) — canal / regional control / direct regime removal: The U.S. invaded Panama in Operation Just Cause, removed Manuel Noriega by force, and installed the recognized election winner under U.S. military occupation.

- Cuba (1961 onward) — failed regime-change attempt: The Bay of Pigs was a direct U.S.-backed invasion intended to overthrow Castro; it failed, but it remains one of the clearest documented regime-change attempts.

The conclusion is that the record does show a recurring U.S. pattern after 1945: when a government threatened strategic control, anti-communist doctrine, major corporate interests, access routes, or regional dominance, Washington used covert action, proxy forces, sanctions, or outright invasion to reshape the political outcome.

South Africa does not feature in the list above, but its engagement with the U.S. is worth examining on its own terms.

The Uppity Africans

South African gold was an important part of the Western financial system that emerged after World War II. Because the Bretton Woods order linked money, gold, and the dollar, South Africa’s huge gold output gave it real strategic value to Britain, Europe, and the wider Western bloc. Gold exports brought apartheid South Africa vital foreign exchange and helped make the regime economically durable for decades, while Western financial systems benefited from the steady flow of South African gold into global markets, especially through London.

The United States did not directly run South Africa’s mines or buy all of its gold outright, but it understood the country’s mineral and monetary importance and, for many years, treated apartheid South Africa as a useful anti-communist ally rather than a moral crisis.

That is why Washington tolerated and worked around the regime for so long, including reaching a 1969 understanding on gold marketing, before shifting much later under growing political pressure. By the mid-1980s, when apartheid had become harder to defend internationally, the U.S. finally moved toward sanctions. So the factual position is that South African gold helped support both the apartheid economy and the wider Western monetary order, but Europe’s broader recovery still depended far more on American financing, industrial rebuilding, and revived trade than on gold alone.

The broader pattern is that post-1945 Western engagement in Africa was not mainly humanitarian or developmental in the modern sense. It was usually about oil, uranium, copper, bauxite, diamonds, shipping routes, and Cold War alignment. The methods differed by empire and by period — direct colonial rule, support for friendly regimes, financial leverage, security partnerships, and selective intervention — but the economic logic was consistent: keep access to strategic resources, keep hostile powers out, and preserve a global financial and industrial order centered on Western states. South Africa was central because of gold, but it was not unique; much of Africa was folded into the postwar Western system through resource extraction and geopolitical management.

Direct and Indirect Death Toll

If you total the deaths in major conflicts, coups, and political orders where the U.S. had clear documented influence, a defensible conservative working total is roughly 11 to 12 million deaths.

That comes from adding the biggest widely cited cases: Korea at a minimum of 2.5 million dead, Vietnam at about 3.3 million dead, Indonesia 1965–66 at roughly 500,000 to 1 million killed, Guatemala at about 200,000 killed or disappeared, Chile under Pinochet at roughly 2,600 to 3,400 executed or disappeared, Iran 1953 and the Shah-era repression linked to the coup in the hundreds to low thousands rather than hundreds of thousands, and the main post-9/11 U.S. war zones at 4.5 to 4.7 million total deaths, direct and indirect.
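For readers who want to check that arithmetic, here is a minimal Python sketch that adds up the ranges quoted in the paragraph above. The figures come straight from that paragraph; the dictionary layout and the function name `total_range` are illustrative choices, not part of any cited source. Iran is left out because the text gives only "hundreds to low thousands", which does not move a total measured in millions.

```python
# Death estimates as (low, high) pairs, copied from the paragraph above.
# Single-point estimates use the same value for both bounds.
ESTIMATES = {
    "Korea": (2_500_000, 2_500_000),          # stated as a minimum, so this is a floor
    "Vietnam": (3_300_000, 3_300_000),
    "Indonesia 1965-66": (500_000, 1_000_000),
    "Guatemala": (200_000, 200_000),
    "Chile under Pinochet": (2_600, 3_400),
    "Post-9/11 war zones": (4_500_000, 4_700_000),  # direct and indirect deaths
}
# Assumption: Iran is omitted because no numeric range is given in the text.


def total_range(estimates):
    """Sum the low bounds and the high bounds separately."""
    low = sum(lo for lo, _ in estimates.values())
    high = sum(hi for _, hi in estimates.values())
    return low, high


low, high = total_range(ESTIMATES)
print(f"{low / 1e6:.1f} to {high / 1e6:.1f} million")
```

The low and high sums both land between 11 and 12 million, which is where the working total comes from. Because Korea enters as a floor, the true upper bound could be higher than this sketch suggests.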

For indirect deaths, meaning deaths from collapsed health systems, hunger, displacement, sanctions, destroyed infrastructure, and aid withdrawal rather than people killed directly in combat, the most defensible recent estimate tied to U.S. policy starts with Brown University’s finding of about 3.6 to 3.8 million indirect deaths in the main post-9/11 war zones linked to U.S. operations. That is the strongest observed multi-country figure available.

Beyond that, the numbers rise sharply but become methodologically less clean: a 2025 Lancet Global Health study estimated 564,258 deaths per year associated with unilateral sanctions globally, though that is not a U.S.-only figure. The U.S. is the dominant sanctions power, but its European allies share the responsibility.

2025–2026 modelling studies project that major U.S. foreign-aid cuts could contribute millions more deaths by 2030, including about 14.1 million under one USAID-focused scenario and up to 22.6 million under a broader severe global aid-defunding scenario, again with attribution that is partly U.S.-specific and partly shared with other donor cuts.

Modern US Warfare vs the UN

In the modern post-1945 system, the United States has often treated the UN less as a binding authority than as a forum it supports when useful and bypasses when inconvenient, but under Donald Trump that selective approach became much more explicit and confrontational.

Since returning to office in January 2025, Trump has again moved to pull the U.S. away from major multilateral bodies and agreements by stopping U.S. engagement with the UN Human Rights Council, continuing the halt to UNRWA funding, ordering withdrawal from the World Health Organization, restarting withdrawal from the Paris climate agreement, reviewing UNESCO, proposing to scrap or sharply cut UN peacekeeping funding, and leaving the UN carrying large U.S. arrears even as some partial payments were later discussed.

At the same time, Washington has continued to use its power inside the UN system very aggressively where it suits U.S. priorities, including vetoing Security Council ceasefire action on Gaza and even sanctioning a UN expert critical of Israel’s conduct. So the modern reality is not that the U.S. ignores the UN altogether; it is that under Trump the U.S. has shown a particularly thin, transactional form of adherence: willing to use the institution when it legitimizes American goals, but quick to defund, exit, paralyze, or punish parts of the system when they constrain those goals.

The “Good Guys”

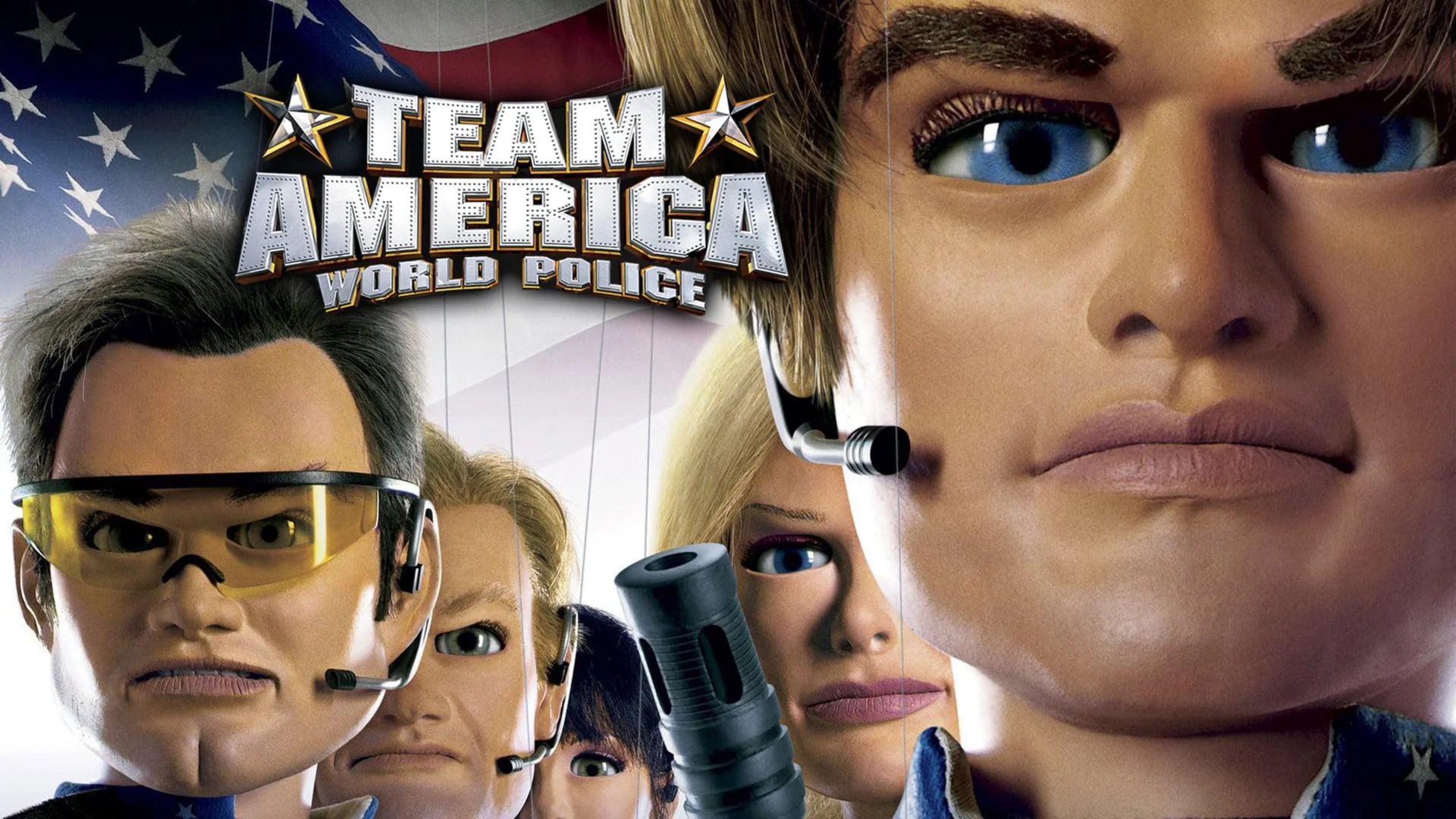

There is solid evidence that American film and television have long helped reinforce a “good America versus dangerous enemy” narrative, first through World War II and later through the post-9/11 War on Terror. The Pentagon has an openly documented relationship with Hollywood and has historically supported productions that align with U.S. military image goals, while decades of media research have shown that Arabs and Muslims were repeatedly stereotyped on screen as violent, fanatical, or terrorist threats. So the grounded version of the theory is not that every film is part of one central conspiracy, but that state influence, commercial storytelling, and repeated cultural stereotypes combined to embed a simplified psychological script in which America is cast as the protector and Muslims are often framed as the modern “enemy.”

The economic argument is that U.S. military expansion persists because it functions as a vast domestic economic system as much as a security system: with a FY2025 Defense Department request of about $849.8 billion and total 2024 military spending of roughly $997 billion, or 37% of global military expenditure, the military supports huge contractor networks, research pipelines, industrial jobs, regional economies, and political patronage across the country.

That makes it easier to sell to taxpayers not as endless war spending, but as employment, technology, local investment, and national insurance. At the same time, Americans consistently rank national security as their top foreign-policy priority, which helps keep the system politically durable even when the foreign interventions themselves are costly and controversial. In simple terms, the U.S. military machine survives economically because too many jobs, contracts, industries, and political incentives are tied to it, and psychologically because voters are told that global dominance is safer than pulling back.

It is not only money or military power, but the consistent repetition of stories and narratives, that shapes the belief systems people hold.

Before World War II, propaganda was often blunt, state-led, and openly political. In the modern era, the same basic psychological conditioning is more diffuse, more polished, and far more constant, arriving through films, series, news cycles, streaming platforms, celebrity culture, video games, and social media. Over time, that steady entertainment-led bombardment teaches audiences who to fear, who to trust, who the “good guys” are, and which violence feels justified before they have consciously examined any of it.

That is how narrative power works in practice: not by forcing belief in one moment, but by normalising a worldview over years until military dominance, permanent enemies, and endless intervention begin to feel less like political choices and more like common sense.

The Left / Right

What this has produced inside the United States is a more extreme form of negative partisanship, where many people are motivated less by love for their own side than by fear and disgust toward the other.

In that environment, each camp is reduced to a hostile caricature: on the right, the left is painted as unpatriotic, sexually and culturally threatening, chaotic, and detached from reality; on the left, the right is painted as ignorant, authoritarian, violent, corrupt, and morally backward.

Those images are then reinforced inside fragmented media ecosystems, where partisan news, social platforms, and algorithmic feeds reward outrage, simplify complex issues, and mix real facts with distortion.

Research shows Americans are deeply polarized over politics and media trust, and even current issues like immigration and Iran break sharply along partisan lines, with Republicans and Democrats interpreting the same events through entirely different moral and informational frames. So the deeper problem is not just disagreement; it is that millions of Americans now consume politics through self-reinforcing echo chambers that train them to see the other side not as rivals in a democracy, but as an internal enemy.

A grounded way to frame this is that the conflict goes beyond ordinary U.S. left-right politics because it sits at the intersection of identity, religion, migration, security narratives, and great-power backing: for decades, parts of the American public were conditioned by media and politics to read the Middle East through a civilizational lens of “ally versus terrorist,” and after 9/11 that frame hardened around Muslims in particular, which made it easier for many Americans to dissociate themselves from the human consequences of U.S.-backed violence abroad.

In the Gaza case, it is factually correct that Israel’s campaign has been sustained by very large U.S. military support — Brown’s Costs of War project estimates at least $21.7 billion in U.S. military aid to Israel since October 2023 — while UN reporting documents continuing civilian casualties and devastation in Gaza. It is also true that international legal bodies have acted, but with limited coercive effect: the ICJ has issued provisional measures in South Africa’s genocide case against Israel, and ICC case records show arrest-warrant proceedings involving Benjamin Netanyahu and Yoav Gallant, yet neither process has stopped the war in practice. So the sharper conclusion is not that the UN has literally no function, but that it has repeatedly proven too weak to protect civilians when a heavily armed U.S.-backed ally is involved, and that this failure feeds the wider perception that Western power applies law and morality selectively rather than universally.

Politically and historically, South Africa has framed its role around its own anti-apartheid history and its post-1994 foreign-policy identity. South African officials have repeatedly said they view the Palestinian question through the lens of apartheid, racial oppression, and international solidarity, and that remaining silent would contradict both South Africa’s constitutional values and its international-law commitments. In September 2025, Minister Ronald Lamola said South Africa chose to act “in accordance with our constitutional values and international law obligations,” while Presidency and DIRCO statements have continued to present the case as part of a duty to prevent genocide and oppose impunity.

Controlling the Narrative

The broader problem is that major U.S. news and media platforms do not just report politics, they increasingly package it for these partisan audiences, which deepens the left-right divide by rewarding outrage, simplifying complex events into tribal storylines, and mixing real facts with selective framing and distortion.

Research shows Republicans and Democrats now trust very different news sources, while overall trust in national news has fallen sharply and low confidence in journalists is widespread.

At the same time, studies of partisan media find that repeated exposure can increase perceived polarization and lock people into echo chambers where the other side is no longer seen as wrong, but as dangerous or illegitimate. So the manipulation is often less about inventing everything from scratch and more about choosing which facts to amplify, which emotions to trigger, and which enemies to keep permanently in view until politics becomes a constant psychological battle rather than a shared public conversation.

Concrete evidence supports the view that war narratives in the Middle East are controlled, but the methods differ by actor:

In Gaza specifically, Israel has barred independent foreign journalists since October 2023, allowing only rare military-escorted visits, while major agencies including BBC, AFP, AP, and Reuters have publicly demanded access. At the same time, CPJ says Israel was responsible for two-thirds of all journalist killings worldwide in both 2024 and 2025, and cases such as the killing of Reuters journalist Issam Abdallah in Lebanon have been described by investigators and press-freedom groups as targeted or requiring war-crimes investigation.

In Iran, the pattern is less about foreign reporters being hit on the battlefield and more about state information control through journalist arrests, intimidation, and internet blackouts, including a near-total shutdown reported in January 2026 and RSF warnings in March 2026 that journalists were working under bombardment while facing pressure from the security state.

For the United States, the strongest evidence is not a clean 50-year pattern of systematically assassinating journalists, but rather a mix of lethal incidents, restricted access, and narrative management through systems like military embedding. Across the wider region, many other states and armed groups have also used censorship, arrests, kidnappings, and killings to shape what the world sees.

So the grounded conclusion is that the narrative is being controlled, not by one single actor alone, but through a recurring regional logic: restrict access, intimidate reporters, shape coverage, and narrow what outside audiences are allowed to believe.

Weaponised Social Platforms

Modern consumer technology has created vast private surveillance systems, and the hard fact is that states increasingly tap into them. In the United States, this does not mean Facebook or Google are openly “military-run,” but it does mean intelligence agencies can lawfully compel some provider assistance under Section 702, while ODNI’s 2024 framework openly acknowledges that the government also buys and uses commercially available information gathered by private companies from apps, phones, vehicles, and connected devices. Companies such as Palantir sit on the other side of that ecosystem, not as consumer platforms but as firms that turn large, fragmented datasets into operational intelligence for government and defense customers. So the proven reality is that modern platforms are commercial data-extraction systems first, and that makes them inherently valuable to intelligence and security institutions.

Europe’s response has been to treat these cross-border data flows as a sovereignty and civil-liberties problem. The clearest examples are the EU-linked enforcement actions against major platforms: in 2023, Ireland’s Data Protection Commission fined Meta €1.2 billion over Facebook data transfers from the EU/EEA to the United States after the Schrems II fallout, and in 2025 it fined TikTok €530 million over transfers of EEA user data to China and related transparency failures. That is why TikTok became so politically explosive: the controversy is not based on a clean public proof that Beijing has already weaponized the platform at scale, but on the documented reality that massive social platforms hold sensitive personal data and recommendation power, and regulators increasingly see foreign-state access risks as real rather than hypothetical.

China and Russia have responded by building more controlled domestic digital ecosystems, partly to avoid dependence on U.S.-linked platforms and partly to tighten internal control. That does not mean they escaped surveillance; it means they localized it. The grounded distinction, then, is this: the facts show that private tech firms collect extraordinary amounts of personal data, governments seek access through law, contracts, and data markets, and regulators increasingly view those flows as national-security issues. The speculation begins only when people jump from that to the claim that every major platform is a direct intelligence front. The truth is more structural and more credible: digital platforms function as giant behavioral-intelligence reservoirs, and states on all sides now treat them accordingly.

Financial Systems

The United States is still on top, but it is no longer unchallenged in the way it was after World War II. The dollar remains dominant in the parts of the system that matter most: the IMF says global reserves were still about $13 trillion in 2025 and the dollar’s share changed only marginally, while SWIFT’s February 2026 RMB tracker shows the yuan was only the 5th most active payment currency with a 3.13% share in January 2026, which is growth, but not near dollar replacement.

BRICS is real and larger than before — Brazil’s official BRICS site lists 11 member countries, including South Africa and Iran — and BRICS leaders and finance ministers are openly pushing local-currency financing, payment cooperation, and reduced dependence on the dollar system, but the official documents still read more like a long transition than a completed breakaway.

What has changed is not that the U.S. system has collapsed, but that its weak points are now visible to everyone. The biggest one is debt and deficit dependence: U.S. Treasury fiscal data says gross federal debt averaged 124% of GDP in fiscal 2025, and CBO projects debt held by the public rising from 101% of GDP in 2026 to 120% by 2036.

That does not mean immediate failure, because the U.S. still borrows in its own currency and sits at the center of global liquidity, but it does mean the system depends on continued confidence in Treasuries, dollar settlement, and U.S. institutions.

The Federal Reserve’s 2025 Financial Stability Reports also flagged familiar pressure points: elevated asset valuations, borrowing vulnerabilities, and funding risks. So the real danger is less “America loses tomorrow” and more “the world keeps building around America while America runs a more leveraged, politically brittle system than it used to.”

On the petrodollar point, the strongest fact-based version is that de-dollarization is happening at the margins, not at the core. Some oil and trade deals are being settled outside dollars more often than before, and BRICS countries are deliberately building alternatives, but the dollar still dominates reserves, payments plumbing, offshore funding, and global collateral use.

That is why headlines about Iran accepting yuan or countries trading in local currencies matter politically more than they yet matter systemically.

The U.S. remains powerful because it still controls the deepest bond market, the main reserve currency, key payment rails, and much of global risk pricing. The real weak points in the current global system are therefore not one dramatic trigger, but a cluster: U.S. debt saturation, overfinancialization, fragile confidence in sovereign debt markets, high asset-price dependence, geopolitically driven payment fragmentation, and the slow build-out of alternative blocs like BRICS. South Africa is relevant because it sits inside that BRICS push and the broader Global South realignment, but it is not leading a replacement system yet; it is participating in the attempt to create one.

The Eastern Front

Strategically, the U.S. turned Japan into its main forward military platform in East Asia: a demilitarized ally on paper, but in practice a heavily integrated base network for American air, naval, missile, and logistics power projecting into the western Pacific. Today Japan is still described by both Washington and Tokyo as a central pillar of Indo-Pacific deterrence, with expanding cooperation in ballistic missile defense, cyberspace, outer space, maritime security, and extended deterrence, while U.S. forces remain forward-deployed from places like Yokosuka and Okinawa and are now being linked to newer capabilities, including a stronger “denial defense posture” and even the 2025 deployment of the Typhon missile system to mainland Japan.

For Japanese people, that meant their country was rebuilt not just as an economy, but as a buffer, launch pad, and anchor state for U.S. military power against first the Soviet Union, then China, North Korea, and wider regional threats. Japan got security cover and room to focus on economic growth, but the trade-off was a long-term U.S. military presence on its soil, especially in Okinawa, and a strategic role defined heavily by American priorities. In blunt terms, the occupation did not just defeat Japan militarily; it repositioned Japan permanently inside a U.S.-led security architecture as the key unsinkable base of the American order in Asia.

Japan is strategically important to U.S. military interests not just because it hosts American bases, but because it also produces critical parts of the modern defense supply chain. Its biggest role is in semiconductor materials, specialty chemicals, wafers, sensors, power semiconductors, and chip-manufacturing equipment, all of which are essential for missiles, radar, satellites, aircraft, and secure communications. Japan is also becoming more important in actual defense production, including solid rocket motor manufacturing and parts of the military drone supply chain, while its cooperation with the U.S. on critical minerals and rare earths supports the batteries, magnets, electronics, and advanced systems used in modern weapons. In simple terms, Japan is not only a military base platform for the U.S., but also a trusted industrial partner helping supply the precision technology and materials that American military power now depends on.

This does not sit well with China

China and Japan have a deeply interdependent but distrustful relationship, and the U.S. military presence in the region is central to it. Economically, they still matter a great deal to each other through trade, manufacturing supply chains, and regional business ties. Politically and strategically, however, the relationship is tense: they clash over the East China Sea islands claimed by both sides, Japan is increasingly aligning with the U.S. on security and supply-chain resilience, and Beijing is reacting sharply to Japan’s military buildup and its stance on Taiwan. Recent examples include China’s coast guard expelling a Japanese fishing boat near the disputed islands, China imposing export controls on Japanese entities over what it called “remilitarisation,” and Japan deepening U.S. cooperation on rare earths and critical minerals to reduce dependence on China. So the plain answer is that China and Japan are neither friends nor enemies in the simple sense: they are major neighbours tied together by commerce, but divided by history, territory, and the growing military rivalry shaping Asia.